%%HTML

<script src="require.js"></script>

from IPython.display import display, HTML, clear_output

import ipywidgets as widgets

HTML('''<script src="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.0.3/jquery.min.js "></script><script>

code_show=true;

function code_toggle() {

if (code_show){

$('div.jp-CodeCell > div.jp-Cell-inputWrapper').hide();

} else {

$('div.jp-CodeCell > div.jp-Cell-inputWrapper').show();

}

code_show = !code_show

}

$( document ).ready(code_toggle);</script><form action="javascript:code_toggle()"><input type="submit" value="Click here to toggle on/off the raw code."></form>

''')

In the era of abundant streaming services, finding similar movies after watching one poses a challenge. Relying solely on genre for recommendations may disappoint users, as it overlooks nuanced, less straightforward, and less obvious features, potentially suggesting irrelevant films despite sharing a genre. Hence, using all the features of movie as a query instead, which is the underpinning of an Information Retrieval System for movies, a type of movie recommender system, would help in addressing the lack of nuance provided by solely genre-based movie recommenders. With respect to this, our study aims to explore what potential characteristics, features, and parameters makes an Information Retrieval System, which is based on a movie dataset, effective.

The team processed a dataset comprising 128 movies scraped from Rotten Tomatoes, employing techniques like one-hot encoding, TFIDF-normalization, and Min-Max Normalization for data cleaning. Within this dataset, Drama emerged as the most frequent genre, and English was the most commonly used language. The movie list was purposely limited in order to make evaluating the effectiveness of the Information Retrieval systems feasible.

Two Information Retrieval systems were then created with different "optimized" vectorizer settings (which included stemming, word normalization, setting the maximum and minimum document frequency requirements, and such) and with default vectorizer settings. These were further divided by distance measures which included Euclidean, Manhattan, and Cosine distance measures.

In evaluating the variants, the most optimal k parameter was determined using a Precision-Recall vs. k graph, informing Average Precision, Average Recall, and Average F-1 score at k. Additional metrics encompassed Mean Average Precision, R-Precision, and Eleven-Point Precision-Recall Curve. Key findings indicated that the default setting is optimal (1), cosine distance is the preferred measure (2), larger datasets lead to higher metrics (3), and a k of 5 is roughly the optimal number of results (4). Moreover, analyzing the R-Precision of each query, it was found that increasing the number of queries would account for coincidentally well-representative queries and less-representative queries in the queries, thereby removing the possible skew in the metrics, and that increasing the data points lead to higher metrics due to having more chances of acquiring salient features for a given characteristic of the data points.

Recommendations for future studies include increasing the size of the dataset (1), creating hypotheses for each change in setting (2), increasing the number of test queries (3), improving the gold standard by outsourcing external "movie watchers" (4), and comparing cosine distance with other distance measures not studied in this report (5). This would allow for a more extensive exploration and discussion of the interplay among the characteristics of the Information Retrieval System, the characteristics of its dataset, and the entertainment domain, through which the most optimal settings are found for movie recommender systems and through which a better movie recommender system is created.

Import Libraries

The following libraries and functions were imported:

- Pandas for managing dataframes and tabular data

- Requests for acquiring access to the relevant Rotten Tomatoes webpages

- Beautiful Soup 4 for scraping the Rotten Tomatoes webpages

- Regular Expression for utilizing patterns with the HTML script during scraping

- OS for managing downloaded webpages from Rotten Tomatoes

- SQLite3 for accessing and querying created databases

- sklearn's CountVectorizer for vectorizing document columns

- nltk's EnglishStemmer for stemming the words in the documents

- sklearn's TfidfTransformer for normalizing the token frequencies into TF-IDF

- matplotlib's pyplot for plotting some of the relevant visualizations in the paper

- Seaborn for plotting the other relevant visualizations in the paper

- sklearn's MinMaxScaler for scaling the continuous variables

- Warnings for hiding the warnings and cleaning the notebook

- scipy's distance module for measuring distance metrics

- scipy's trapz for calculating the area under the curve

- Numpy for faster manipulation of matrices

- IPython for displaying purposes

- ipywidgets for creating interactive widgets

import pandas as pd

import bs4

import requests

import re

import os

import sqlite3

from sklearn.feature_extraction.text import CountVectorizer

from nltk.stem.snowball import EnglishStemmer

from sklearn.feature_extraction.text import TfidfTransformer

from matplotlib import pyplot as plt

from sklearn.preprocessing import MinMaxScaler

import numpy as np

from scipy.spatial.distance import euclidean, cityblock, cosine

from scipy.integrate import trapz

import warnings

import seaborn as sns

Define Functions

The following functions were defined:

- get_info for getting the information for each movie, coming from an HTML file

- get_all_movie_info for creating a dataframe for the movies' details

- one_hot_encode for one-hot encoding all categorical columns

- movie_sql for creating and updating a database from the collected and processed data

- load_sql for converting the table in the database to a dataframe

- sort_idf for acquiring the idf of each token and collecting additional potential stopwords

- preprocessor for preprocessing the documents

- bag_of_words for converting documents into bag-of-words

- normalize_tfidf for normalizing bag-of-word vectors to TFIDF

- nearest_k for returning the results of the IR

- kappa for calculating the kappa statistic and presenting the relevance matrix

- pk for calculating the Precision @ k

- a_pk for calculating the Average (Precision @ K)

- rk for calculating the Recall @ k

- a_rk for calculating the Average (Recall @ k)

- apk for calculating the Average Precision @ k

- mapk for calculating the Mean Average Precision @ k

- mf_onek for calculating the Mean F-1 Score @ k

- rprec for calculating the individual R-Precisions

- ave_rprec for calculating the Average R-Precision

- ave_prg for plotting the 11-point Precision/Recall graph

- pretty_print for printing dataframes more cleanly

- recommend for recommeding movie titles based on a title

- search_k_title for listing all relevant movies based on k and title

def get_info(html_file):

"""Get the information for each movie, which comes from an html file.

Parameters

-------

html_file : str

Name of html file

Returns

-------

mydict : dict

Dictionary which contains all features of a movie

"""

with open(html_file) as f:

html_content = f.read()

soup = bs4.BeautifulSoup(html_content)

title = soup.head.title.text.replace(' - Rotten Tomatoes', '')

synopsis = soup.find('p', {'data-qa': 'movie-info-synopsis'}).text

genre_raw = soup.find('span', {'class': 'genre'}).text

genre = genre_raw.replace('\n', '').replace(' ', '').split(',')

runtime_raw = soup.find('b', string='Runtime:').find_parent() \

.find('span').find('time').text.strip()

match = re.match(r'(\d+)h (\d+)m', runtime_raw)

runtime = int(match.group(1)) * 60 + int(match.group(2)) if match else 0

language = soup.find('b', string='Original Language:') \

.find_parent().find('span').text

top_critics = soup.find_all(

'review-speech-balloon-deprecated',

{'istopcritic': 'true'}

)

critic = '\n\n'.join(tc.get('reviewquote', '') for tc in top_critics)

mydict = {

'title': title,

'synopsis': synopsis,

'genre': genre,

'runtime': runtime,

'language': language,

'critic': critic

}

return mydict

def get_all_movie_info():

"""Create a dataframe for the movies' details.

Returns

-------

df

Data Frame containing all the movies' details

"""

movie_list = []

folder_path = "movies"

for filename in os.listdir(folder_path):

file_path = os.path.join(folder_path, filename)

if os.path.isfile(file_path):

movie_list.append(get_info(file_path))

print('done with ', file_path)

return pd.DataFrame(movie_list)

def one_hot_encode(movies):

"""For categorical columns, such as genre and language, one-hot-encode

them. And return the new dataframe with one-hot-encoded categorical

variables.

Parameters

-------

movies : df

Data Frame containing all the movies and their features

Returns

-------

movies_encoded : df

Data Frame where the categorical features from the original Data Frame

are one-hot encoded

"""

genre_dummies = pd.get_dummies(

movies['genre'].apply(pd.Series).stack()).groupby(level=0).sum()

genre_dummies = genre_dummies.add_prefix('g_')

language_dummies = pd.get_dummies(

movies['language'], dtype='int').add_prefix('lang_')

movies_encoded = pd.concat([movies, genre_dummies, language_dummies],

axis=1)

movies_encoded.drop(['genre', 'language'], axis=1, inplace=True)

movies_encoded.columns = movies_encoded.columns.str.lower()

return movies_encoded

def movie_sql(df):

"""Create a table from a Data Frame which will be placed inside a

SQLite3 database

Parameters

-------

df : df

Data Frame containing all the movies and their features

"""

db_file = 'rotten_tomatoes.db'

conn = sqlite3.connect(db_file)

df.to_sql('movie_encoded', conn, index=False, if_exists='replace',)

conn.close()

def load_sql(db='rotten_tomatoes.db'):

"""Return the Data Frame from the table in the SQLite3 database

Parameters

-------

db : str, optional

Name of the SQLite3 database

Returns

-------

df : df

Data Frame which was loaded from the SQLite3 database

"""

conn = sqlite3.connect(db)

cursor = conn.cursor()

df = pd.read_sql("SELECT * FROM movie_encoded", conn)

cursor.close()

conn.close()

return df

def sort_idf(df, cv):

"""Calculate the idfs for each movie and inserting them into a Data Frame,

sort them by ascending order and return the Data Frame.

Parameters

-------

df : df

Data Frame containing all the movies and their features

cv : CountVectorizer

Fitted CountVectorizer containing the feature names

Returns

-------

df_idf : df

Data Frame containing the sorted idfs

"""

tfidf_transformer = TfidfTransformer(smooth_idf=True, use_idf=True)

tfidf_transformer.fit(df)

df_idf = pd.DataFrame(tfidf_transformer.idf_,

index=cv.get_feature_names_out(),

columns=["idf_weights"])

df_idf = df_idf.sort_values(by='idf_weights')

return df_idf

def preprocessor(text):

"""Return a preprocessed document which is stemmed, lowercased, and removed of

special character and where some words are normalized to a similar

word `_connector_`.

Parameters

-------

text : str

Document

Returns

-------

str

Preprocessed document

"""

english_stemmer = EnglishStemmer()

text = text.lower()

# remove special chars

text = re.sub(r"\W", " ", text)

# normalize certain words

text = re.sub(r"\s+(in|the|all|for|and|on)\s+", " _connector_ ", text)

# stem words

words = re.split("\\s+",text)

stemmed_words = [english_stemmer.stem(word=word) for word in words]

return ' '.join(stemmed_words)

def bag_of_words(srs, mode, stop_extend=None):

"""Return the vectorized document, whose preprocessing settings differ

based on the mode and for the "optimal" preprocessing, whose stop words

may be extended by `stop_extend`.

Parameters

-------

srs : Series

Series containing the document

mode : str

Default or `Optimal`

stop_extend : list, optional

List of extra stop words

Returns

-------

vectorizer : CountVectorizer

Fitted CountVectorizer

bowmatrix : array-like

Bag-of-word matrix

"""

with open('minimal-stop.txt', 'r') as stop:

lst_temp = stop.readlines()

lst_stop = [i.replace('\n', '') for i in lst_temp]

if stop_extend is not None:

lst_stop.extend(stop_extend)

if mode == 'default':

vectorizer = CountVectorizer()

bowmatrix = vectorizer.fit_transform(srs)

stop.close()

return vectorizer, bowmatrix

else:

vectorizer = CountVectorizer(preprocessor=preprocessor,

stop_words=lst_stop,

ngram_range=(1, 2),

max_df=0.9,

min_df=0.1,)

bowmatrix = vectorizer.fit_transform(srs)

stop.close()

return vectorizer, bowmatrix

def normalize_tfidf(df):

"""Normalize the frequencies of each token column by its TF-IDF.

Parameters

-------

df : df

Data Frame containing the bag-of-word matrix

Returns

-------

tfidf_transformer : TfidfTransformer

Fitted transformer for TF-IDF

df_idf : df

Data Frame containing the TF-IDF normalized matrix

"""

tfidf_transformer = TfidfTransformer(smooth_idf=True, use_idf=True)

df_idf = tfidf_transformer.fit_transform(df)

return tfidf_transformer, df_idf

def nearest_k(query, objects, k, dist):

"""Return the indices to objects most similar to query

Parameters

----------

query : ndarray

query object represented in the same form vector representation as the

objects

objects : ndarray

vector-represented objects in the database; rows correspond to

objects, columns correspond to features

k : int

number of most similar objects to return

dist : function

accepts two ndarrays as parameters then returns their distance

Returns

-------

most_similar : ndarray

Indices to the most similar objects in the database

"""

return np.argsort([dist(query, obj) for obj in objects], kind="stable")[:k]

def kappa(df1, df2, name1, name2):

"""Compute the kappa statistic for a given pair of judges and return both

the kappa statistic and relevance matrix

Parameters

----------

df1 : df

Data Frame containing the first judge's votes

df2 : df

Data Frame containing the second judge's votes

name1 : str

Name of first judge

name2 : str

Name of second judge

Returns

-------

kappa : float

Kappa statistic

rel_mat : df

Relevance matrix

"""

yes_yes = 0

yes_no = 0

no_yes = 0

no_no = 0

df1 = df1.notnull().astype('int')

df2 = df2.notnull().astype('int')

for (index1, row1), (index2, row2) in zip(df1.iterrows(), df2.iterrows()):

for column in df1.columns:

if row1[column] == 1 and row1[column] == row2[column]:

yes_yes += 1

elif row1[column] > row2[column]:

yes_no += 1

elif row1[column] < row2[column]:

no_yes += 1

elif row1[column] == 0 and row1[column] == row2[column]:

no_no += 1

yes_tup1 = (name1, 'Yes')

yes_tup2 = (name2, 'Yes')

no_tup1 = (name1, 'No')

no_tup2 = (name2, 'No')

total_tup1 = (name1, 'Total')

total_tup2 = (name2, 'Total')

index = pd.MultiIndex.from_tuples([yes_tup1, no_tup1, total_tup1])

cols = pd.MultiIndex.from_tuples([yes_tup2, no_tup2, total_tup2])

rel_mat = pd.DataFrame([[yes_yes, yes_no, yes_yes + yes_no],

[no_yes, no_no, no_yes + no_no],

[yes_yes + no_yes, yes_no + no_no, (

yes_yes + yes_no + no_yes + no_no)]],

columns=cols,

index=index)

# Getting kappa statistic

total = yes_yes + no_no + yes_no + no_yes

p_a = (yes_yes + no_no) / total

p_nonrelevant = (yes_no + no_no + no_yes + no_no) / (total * 2)

p_relevant = (yes_yes + yes_no + yes_yes + no_yes) / (total *2)

p_e = (p_nonrelevant ** 2) + (p_relevant ** 2)

kappa = (p_a - p_e) / (1 - p_e)

return kappa, rel_mat

def pk(df, y_test, y_pred, k=5):

"""Compute precision @ k for an input boolean dataframe

Parameters

----------

df : df

Data Frame containing boolean columns y_text and y_pred

y_test : str

Name of column containing actual relevance in binary where 0 is

irrelevant and 1 is relevant

y_pred : str

Name of column containing ones since the returned results are

essentially considered as relevant by the system

k : int, optional

Integer number of items to consider

Returns

-------

float

Number of precision value for k items

"""

# extract the k rows

dfK = df.head(k)

# compute number of recommended items @ k

denominator = dfK[y_pred].sum()

# compute number of recommended items that are relevant @ k

numerator = dfK[y_test].sum()

# return result

if denominator > 0:

return numerator/denominator

else:

return None

def rk(df, y_test, y_pred, k=5):

"""Compute recall @ k for an input boolean dataframe

Parameters

----------

df : df

Data Frame containing boolean columns y_text and y_pred

y_test : str

Name of column containing actual relevance in binary where 0 is

irrelevant and 1 is relevant

y_pred : str

Name of column containing ones since the returned results are

essentially considered as relevant by the system

k : int, optional

Integer number of items to consider

Returns

-------

float

Number of recall value for k items

"""

# extract the k rows

dfK = df.head(k)

# compute number of all relevant items

denominator = df[y_test].sum()

# compute number of recommended items that are relevant @ k

numerator = dfK[y_test].sum()

# return result

if denominator > 0:

return numerator/denominator

else:

return None

def a_pk(lst_pk):

"""Compute average (precision @ k)

Parameters

----------

lst_pk : list

List of precision values @ k

Returns

-------

float

Average (Precision @ k)

"""

return np.mean(lst_pk)

def a_rk(lst_rk):

"""Compute average (recall @ k)

Parameters

----------

lst_pk : list

List of recall values @ k

Returns

-------

float

Average (Recall @ k)

"""

return np.mean(lst_rk)

def apk(actual, predicted, k=10):

"""

Compute the average precision at k. Compute the average precision at k

between two lists of items.

Parameters

----------

actual : list

A list of elements that are to be predicted (order doesn't matter)

predicted : list

A list of predicted elements (order does matter)

k : int, optional

The maximum number of predicted elements

Returns

-------

score : double

The average precision at k over the input lists

"""

if not actual:

return 0.0

if len(predicted) > k:

predicted = predicted[:k]

score = 0.0

num_hits = 0.0

for i, p in enumerate(predicted):

# first condition checks whether it is valid prediction

# second condition checks if prediction is not repeated

if p in actual and p not in predicted[:i]:

num_hits += 1.0

score += num_hits / (i+1.0)

return score / min(len(actual), k)

def mapk(actual, predicted, k=10):

"""

Compute the mean average precision at k. Compute the mean average

prescision at k between two lists of lists of items.

Parameters

----------

actual : list

A list of lists of elements that are to be predicted (order doesn't

matter in the lists)

predicted : list

A list of lists of predicted elements (order matters in the lists)

k : int, optional

The maximum number of predicted elements

Returns

-------

score : double

The mean average precision at k over the input lists

"""

return np.mean([apk(a, p, k) for a, p in zip(actual, predicted)])

def mf_onek(mean_prec, mean_rec, beta=0.5):

"""Accept the mean precision, mean recall, and beta,

and return the mean F-measure.

Parameters

----------

mean_prec : float

Mean Precision

mean_rec : float

Mean Recall

beta : float, optional

Beta for the F-measure

Returns

-------

F : float

Mean F-measure based on the parameters

"""

first_factor = 1 + beta**2

second_factor = (mean_prec * mean_rec) / ((beta**2 * mean_prec) + mean_rec)

F = first_factor * second_factor

return F

def rprec(actual, predicted):

"""Compute the R-Precision of a query.

Parameters

----------

actual : array-like

List of actual values

predicted : array-like

List of predicted values

Returns

-------

float

R-Precision of a query

"""

rel = len(actual)

predicted = predicted[:rel]

sum_rel = 0

for predict in predicted:

if predict in actual:

sum_rel += 1

return sum_rel / rel

def ave_rprec(actual, predicted):

"""Compute the average R-Precision of several queries.

Parameters

----------

actual : array-like

List of list of actual values (per query)

predicted : array-like

List of list of predicted values (per query)

Returns

-------

float

R-Precision of a query

"""

return np.mean([rprec(a, p) for a, p in zip(actual, predicted)])

def ave_prg(lst_query, objects, dist, actual, lst_all_labels):

"""Draw PR curve

Parameters

----------

query : array-like

find objects similar to this query

objects : ndarray

database of objects to search in

dist : function

function that returns the distance of two input `ndarray`s

actual : int

class label

all_labels : array-like

label of each object in the database

Returns

-------

matplotlib.Axes

rendered PR curve

"""

lst_recalls = []

lst_precisions = []

for query, all_labels in zip(lst_query, lst_all_labels):

all_labels = np.asarray(all_labels)

results = nearest_k(query, objects, len(all_labels), dist)

rs = (all_labels[results] == actual).cumsum()

N = (all_labels == actual).sum()

precisions = rs / np.arange(1, len(rs) + 1)

recalls = rs / N

recalls = [0] + recalls.tolist()

precisions = [1] + precisions.tolist()

lst_recalls.append(recalls)

lst_precisions.append(precisions)

ys = []

x = np.linspace(0, 1, 11, endpoint=True)

for recall, precision in zip(lst_recalls, lst_precisions):

y = np.interp(x, recall, precision)

ys.append(y)

y = np.mean(ys, axis=0)

fig, ax = plt.subplots()

ax.set_xlim(0, 1)

ax.set_ylim(0, 1)

ax.set_xlabel("recall")

ax.set_ylabel("precision")

ax.set_title("Averaged Eleven-Point Precision/Recall Curve")

ax.plot(x, y, "--r")

ax.text(

0.65,

0.8,

"AUC={:0.2f}".format(trapz(y, x)),

fontsize=12,

)

return ax

def pretty_print(df):

"""Pretty print the dataframe

Parameters

----------

df : df

Data Frame

Returns

----------

Display Object

Pretty printed Data Frame

"""

return display(HTML(df.to_html().replace("\\n", "<br>")))

def recommend(name, k=5):

"""Print the Data Frame containing the k `relevant` movies.

Parameters

----------

name : str

Movie whose similar or `relevant` movies would be searched for

k : int, optional

Number of movies to return

"""

query_d = movies_default[movies_default["title_old"] == name].iloc[0]

query_d = query_d.drop("title_old")

results = nearest_k(query_d, movies_default.iloc[:, 1:].to_numpy(),

int(k), cosine)

pretty_print(

pd.DataFrame(movies["title"][results])

.reset_index()

.rename(columns={"title": "Movie"})

.drop("index", axis=1)

)

def search_k_title():

"""Display the drop-down menu widget and button widget

"""

lst_reco_k = list(range(1, 129))

reco_k = widgets.Dropdown(

options=lst_reco_k,

value=5,

description='k',

disabled=False,

)

reco_movie = widgets.Dropdown(

options=movies['title'].tolist(),

value='Afire',

description='Movie Title',

disabled=False,

)

display(reco_k)

display(reco_movie)

button = widgets.Button(description="Search", button_style='success')

output = widgets.Output()

display(button, output)

def on_button_clicked(b):

"""Run an event given a click input of the button

Parameters

----------

b : Button

Button

"""

with output:

clear_output(wait=True)

display(HTML(f'<p><b>Table 27.</b></p>'

f'<p><i>{reco_k.value} movies similar to '

f'{reco_movie.value}</i></p>'))

recommend(reco_movie.value, reco_k.value)

button.on_click(on_button_clicked)

Movies are a staple of entertainment for the modern day. From still images and slideshows to modern cinema and video streaming services, the quality, breadth, and the quantity of movies have been increasing at an exponential rate. Now, we are bombarded with so many movie choices that it is difficult to choose what to watch, or to even know what is available. Movie Recommenders are a necessary part of the movie provider business such as Netflix or Disney+ so they can let users find the movies that they want to see and spend more time on their platform.

This project takes a simplified approach to the same problem that all these providers have; if we have an idea of what kind of movie someone likes, what are the best other movies in our catalog that we can offer to them?

Movie recommendation system has revolutionized the way people discover and consume movies. According to Anish Gulati (2023), recommender systems can be classified as content-based and collaborative-filtering. Content-based filtering uses the similarities of movies to recommend new movies, while collaborative-filtering uses the user’s behavior and ratings to recommend new movies.

The dynamic nature of the entertainment industry, coupled with the vast and ever-growing library of movies, necessitates continuous innovation in recommendation techniques. According to Popflick, approximately 700 movies on average are released yearly. While content-based and collaborative-filtering methods have proven effective, the exploration of alternative approaches is crucial to further enhance the precision and personalization of movie recommendations.

In this context, our study is motivated by the recognition that Information Retrieval (IR) offers a promising avenue for improving movie recommendation systems. Information Retrieval, a field known for efficiently retrieving relevant information from large datasets, has demonstrated success in various domains. However, its application in the realm of movie recommendations remains an area of exploration, and its potential benefits are yet to be fully realized.

Data Source and Description

Our primary data source for this study is Rotten Tomatoes, a reputable online platform for movie and TV show reviews. We extracted details for our analysis from Rotten Tomatoes' list of Top 2023 Movies. This included scraping information such as movie synopsis, critic reviews, and genres.

In this study, we focused on a curated dataset comprising 128 movies. These selections were drawn from the Top 2023 Movies list available on Rotten Tomatoes' editorial page (https://editorial.rottentomatoes.com/guide/best-movies-of-2023/). For each movie, we collected pertinent details by scraping information from its respective Rotten Tomatoes page, exemplified by: https://www.rottentomatoes.com/m/the_first_slam_dunk.

To acquire all the details of the movies, the function get_all_movie_info is used.

%%capture

movies = get_all_movie_info();

The following were the gathered features or columns for the preliminary dataframe:

Table 1.

Columns of Preliminary DataFrame

| No. | Column | Description | Data Type |

|---|---|---|---|

| 1. | Title | title of the movie | string |

| 2. | Synopsis | synopsis of the movie, which would be converted to bag-of-words | string |

| 3. | Runtime | runtime of the movie, in minutes | int |

| 4. | Critic | the concatenated review for the movie of the different Top Critics of Rotten Tomatoes, which would be converted to bag-of-words | string |

| 5. | Genre | genre/s of the movies, which would soon be one-hot encoded with the prefix ‘g_’ (eg. g_drama, g_comedy) | string |

| 6. | Language | language of the film, which would soon be one-hot encoded with the prefix ‘lang_’ (eg. lang_english, lang_french) | string |

Data Assumptions and Limitations

For the purposes of the movie recommender system, the dataset would only include the 128 top-ranking movies in 2023 listed by Rotten Tomatoes in their article. Hence, the similar movies returned by the system would only be movies from 2023, and thus, it could be assumed that the distribution of the movie genres is not equally distributed. This means that some genre may be less represented in the dataset, and vice-versa, which may consequentially cause some queries to return few relevant results.

Still, the decision to limit our dataset to 128 movies was made to ensure practicality in measuring the effectiveness of our Information Retrieval (IR) system. This carefully selected subset allows for a focused and in-depth analysis, enhancing the precision and feasibility of our evaluation.

Database Creation

As a preliminary processing, the multi-class categorical features of the dataframe movies which are genre and language is then one-hot encoded using the function one_hot_encode.

movies = one_hot_encode(movies)

The collected data from each HTML file are then inserted into a database as a table named movie_encoded in the database entitled rotten_tomatoes.db. The team used the following code to do this:

# Convert the dataframe into a table inside a SQL database

movie_sql(movies)

movie_sql(movies)

Data Cleaning

First, the table movies_encoded from the rotten_tomatoes.db is loaded using the function load_sql. A preview of the table is shown below.

%%capture

movies = load_sql()

Table 2.

Preview of the DataFrame

movies.head(3)

| title | synopsis | runtime | critic | g_action | g_adventure | g_animation | g_anime | g_biography | g_comedy | ... | lang_french (canada) | lang_french (france) | lang_german | lang_italian | lang_japanese | lang_korean | lang_norwegian | lang_romanian | lang_spanish | lang_urdu | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Afire | \n While vacationing by the... | 103 | The script, also by director Christian Petzold... | 0 | 0 | 0 | 0 | 0 | 1 | ... | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 1 | A Thousand and One | \n A THOUSAND AND ONE follo... | 117 | The director maps the contours of a destabilis... | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | A Million Miles Away | \n Inspired by the real-lif... | 121 | A Million Miles Away doesn’t exactly zoom past... | 0 | 0 | 0 | 0 | 1 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 45 columns

Second, given that the columns synopsis and critic are essentially documents whose words would need to be parsed one-by-one, they would be converted in bag-of-word format using the function bag_of_words which would preprocess them and convert them into bag-of-words afterwards. Two set of dataframes would be acquired from this process, as the team plans to compare using the default settings and the "optimal" settings for the vectorizer.

Third, the IDF of the tokens in the "optimal" dataframe would be acquired and ranked using the function sort_idf, and the tokens with IDF between 1 and 2 (exclusive) would be removed. Using the function bag_of_words, the optimal settings would be used again, while including the removed tokens in the stop words. The two dataframes (default and optimal) are then normalized to their TFIDF values and concatenated back to the main dataframe.

Fourth, given that the values for runtime are far from the values of the other columns and the ranges of the column are only from zero to one, it would be best to use MinMaxScaler as it would limit the range of column from zero to one. However, since the MinMaxScaler is very sensitive to outliers, it would be checked first whether there are several outliers in the dataset or not.

movies.boxplot('runtime', color='green',

flierprops=dict(markeredgecolor='red',

markerfacecolor='red'))

plt.title('Box-Plot of Runtime Column')

plt.ylabel('runtime')

plt.show()

Hence, given that the outliers are only 4 out of 128 and that they are relatively few, the team would push through with using the MinMaxScaler on the runtime column.

Data Exploration

In the dynamic and diverse world of cinema, Exploratory Data Analysis (EDA) serves as a crucial first step in understanding the underlying patterns and trends that shape the movie industry. Our analytical journey in 2023 begins with a thorough examination of the Rotten Tomatoes database, where each movie serves as a unique narrative entity contributing to the vast cinematic landscape. The EDA process in this context encompasses several key steps, each providing vital insights into different aspects of the movie data.

Runtime Distribution Analysis: We delve into the exploration of movie runtimes, a critical aspect that often influences audience preferences and perceptions. This involves plotting the distribution of runtimes, providing insights into common lengths and deviations.

Category Distribution Exploration: The analysis of movie categories unfolds the diversity in thematic content and narrative styles. This step includes a detailed look at the frequency and prevalence of various movie categories within our dataset.

Genre Distribution Analysis: Finally, we analyze the distribution of movie genres, an aspect that reflects the creative and artistic choices in film-making. This analysis not only highlights popular genres but also uncovers less explored ones, revealing the breadth and depth of cinematic expression in 2023.

Language Distribution Assessment: Movies are often defined by their language, an element that resonates cultural and regional nuances. We examine the distribution of movie languages, offering a glimpse into the linguistic diversity present in the 2023 movie lineup.

Through these EDA steps, we aim to build a comprehensive understanding of the movies released in 2023. This analysis not only aids in appreciating the current trends and preferences in the film industry but also sets the stage for more advanced analytical tasks, such as similarity assessments and predictive modeling, in our quest to explore the cinematic universe of 2023.

Runtime Distribution

warnings.filterwarnings("ignore", category=FutureWarning)

df = movies

bin_ranges = [0, 30, 60, 90, 120, 150, 180, 210, 240, 270, 300]

bin_labels = ['0-30', '31-60', '61-90', '91-120', '121-150',

'151-180', '181-210', '211-240', '241-270', '271-300']

df['runtime_bins'] = pd.cut(

df['runtime'], bins=bin_ranges, labels=bin_labels, right=False)

runtime_counts = df['runtime_bins'].value_counts().sort_index()

top_colors = ['#B74325' if 1 < i < 5 else '#FAE3A6'

for i in range(len(runtime_counts))]

plt.figure(figsize=(10, 6))

sns.barplot(x=runtime_counts.index, y=runtime_counts.values,

palette=top_colors)

plt.xticks(rotation=45)

plt.xlabel('Runtime (minutes)')

plt.ylabel('Number of Movies')

plt.title('Distribution of Movie Runtimes')

plt.show()

The analysis of the runtime distribution highlights a clear inclination towards feature-length films of approximately 1.5 to 2 hours, with a limited but notable acceptance of longer films up to about 2.5 hours. Extremely short and very long films appear to be outliers, indicating their specialized roles within the broader landscape of the film industry.

Dominance of Standard Feature-Length Films: A significant portion of the movies, particularly 86 out of the total, predominantly fall within the 91-120 minutes runtime range. This trend underscores a strong preference for standard feature-length films, which typically align with the audience's attention span and narrative development needs.

Niche Presence of Short and Extended Films: The dataset reveals a minimal presence of extremely short (0-30 minutes, with only 2 films) and notably long films (151-180 minutes with 4 films, and 181-210 minutes with 1 film). This suggests that films deviating significantly from the standard duration are less common, catering to niche audiences or specific cinematic purposes.

Moderate Acceptance of Longer Narratives: Films within the 121-150 minutes range, totaling 19, exhibit a moderate level of prevalence. This could reflect the industry's inclination towards more elaborate storytelling, potentially in genres that demand detailed plot development.

Absence of Ultra-Long Films: The dataset shows no films exceeding 210 minutes, indicating the rarity of ultra-long cinematic experiences in mainstream cinema. Such lengths are likely reserved for unique or specialized film genres.

Clear Demarcation Between Film Categories: The complete absence of films in the 31-60 minutes range points to a distinct separation between short films and feature-length films. This gap aligns with established industry norms and audience expectations regarding the categorization of film lengths.

Movie Category Analysis

warnings.filterwarnings("ignore", category=FutureWarning)

cat = movies.loc[:, 'g_action':'g_western']

cat.columns = [re.sub(r'g_', '', i) for i in cat.columns]

lst_categories = []

for index, row in cat.iterrows():

category = []

for column in cat.columns:

if row[column] == 1:

category.append(column)

categories = ', '.join(category)

lst_categories.append(categories)

cat['categories'] = lst_categories

category_counts = cat['categories'].value_counts()

top_colors = ['#B74325' if i < 5 else '#FAE3A6'

for i in range(len(category_counts))]

plt.figure(figsize=(10, 15))

sns.barplot(y=category_counts.index, x=category_counts.values,

palette=top_colors, orient='h')

plt.xticks(rotation=0, ha='right')

plt.xlabel('Number of Movies')

plt.ylabel('Categories')

plt.title('Distribution of Movie Categories (Top 10)')

plt.tight_layout()

plt.show()

The category distribution in the dataset demonstrates a rich tapestry of cinematic genres, with a strong inclination towards drama, hybrid genres, and suspenseful narratives. The presence of diverse genre combinations suggests an industry that is continually evolving and experimenting, aiming to cater to a wide array of audience preferences and tastes.¶

Predominance of Drama: The single most prevalent category is 'drama,' with 24 films, highlighting its enduring appeal. Drama, known for its depth and emotional complexity, remains a cornerstone genre in the film industry.

Hybrid Genres Gaining Traction: There is a notable presence of hybrid genres, such as 'drama, mystery&thriller' and 'comedy, drama', each with 9 films. This trend signifies a growing interest in films that blend elements from multiple genres, offering more nuanced and multifaceted cinematic experiences.

Horror and Mystery Fusion: The combination of 'horror' with 'mystery&thriller' appears frequently, with 7 films falling into this combined category. Additionally, there are 4 films that blend 'horror, mystery&thriller, sci-fi', suggesting a strong audience interest in suspenseful and speculative narratives.

Diverse Combinations with Comedy: 'Comedy' appears in various combinations, reflecting its versatility as a genre. It is seen paired with 'drama', 'horror', and even 'sci-fi', indicating a tendency to infuse humor into various narrative contexts.

LGBTQ+ Representation: Films categorizing under 'drama, lgbtq+, romance' and similar combinations, although fewer in number, show an inclusion of LGBTQ+ themes in mainstream cinema. This represents a progressive move towards more diverse and representative storytelling.

Action and Mystery: 'Action, mystery&thriller' is another popular combination, with 2 films. This blend suggests a continued audience interest in high-adrenaline, suspense-filled narratives.

Unique One-Off Combinations: Several categories appear only once, such as 'biography, drama, history, music' and 'action, war'. These unique combinations indicate experimentation and niche filmmaking within the industry.

Animation and Family Films: There is also a presence of 'animation' and 'kids&family' genres, sometimes combined with 'comedy' and 'adventure', highlighting the industry's focus on family-friendly entertainment.

Movie Genre Breakdown

genres = movies.loc[:, 'g_action':'g_western']

genres.columns = [re.sub(r'g_', '', i) for i in genres.columns]

genres = genres.sum(axis=0)

genres = genres.sort_values(ascending=False)

top_colors = ['#B74325' if i < 5 else '#FAE3A6'

for i in range(len(genres))]

plt.figure(figsize=(10, 6))

sns.barplot(y=genres.index, x=genres.values,

palette=top_colors, orient="h")

plt.xticks(rotation=0)

plt.xlabel("Number of Movies")

plt.ylabel("Genre")

plt.title("Distribution of Movie Genres")

plt.show()

The genre distribution in the dataset, where each genre is treated as a unique category without combinations, reflects a diverse cinematic palette, with drama and comedy at the forefront, followed by a strong interest in mystery, thriller, and horror. The presence of a wide array of other genres, though less frequent, underscores the industry's capacity to cater to varied and specific audience tastes and preferences.¶

Dominance of Drama: With 80 films categorized under 'g_drama', this genre emerges as the most predominant. Drama's preeminence underscores its universal appeal and versatility in storytelling, capable of encompassing a wide range of human experiences and emotions.

Strong Presence of Comedy: 'g_comedy', with 45 films, holds a significant position, reflecting the audience's consistent preference for humor and light-hearted entertainment. Comedy's ability to appeal to a broad audience base makes it a perennial favorite.

Popularity of Mystery and Thriller: The 'g_mystery&thriller' genre, with 39 films, highlights the audience's inclination towards suspense, intrigue, and excitement. This genre's popularity suggests a strong demand for narratives that provide a sense of suspense and engagement.

Horror as a Staple Genre: With 21 films, 'g_horror' maintains its status as a staple genre. The enduring appeal of horror speaks to the audience's fascination with fear, the supernatural, and the unknown.

Romance Continues to Charm: 'g_romance', represented by 16 films, continues to charm audiences, showcasing the timeless appeal of love stories and romantic narratives.

Action and Sci-Fi: 'g_action' and 'g_sci-fi' genres, with 13 and 11 films respectively, reflect the audience's interest in high-energy, visually impactful, and often technologically driven stories.

Adventure and Diversity: 'g_adventure' and 'g_lgbtq+', each with 10 films, indicate a desire for escapism and exploration, as well as a growing recognition of diverse narratives within the mainstream.

Fantasy and Beyond: 'g_fantasy', with 8 films, along with other genres like 'g_biography', 'g_history', and 'g_animation', each with a handful of films, suggest niche but dedicated audience interests in imaginative worlds, real-life stories, historical narratives, and animated features.

Lesser-Represented Genres: Genres like 'g_crime', 'g_music', 'g_kids&family', and 'g_anime' appear less frequently, indicating more specialized audience segments. Similarly, 'g_holiday', 'g_short', 'g_sports', 'g_musical', 'g_war', and 'g_western', each with 1 or 2 films, represent highly niche categories within the cinematic landscape.

Movie Language Diversity

lang = movies.loc[:, 'lang_arabic':'lang_urdu']

lang.columns = [re.sub(r'lang_', '', i) for i in lang.columns]

lang = lang.sum(axis=0)

lang = lang.sort_values(ascending=False)

top_colors = ['#B74325' if i < 5 else '#FAE3A6'

for i in range(len(lang))]

plt.figure(figsize=(10, 6))

sns.barplot(y=lang.index, x=lang.values,

palette=top_colors, orient="h")

plt.xticks(rotation=0)

plt.xlabel("Number of Movies")

plt.ylabel("Languages")

plt.title("Distribution of Movie Languages")

plt.show()

The language distribution analysis reveals a strong skew towards English, underscoring its predominant role in the global movie industry. However, the presence of a variety of other languages highlights the rich diversity and multicultural nature of contemporary cinema. This linguistic variety not only represents different cultural perspectives but also showcases the expansive reach and inclusive nature of the film industry, catering to a global audience with varied linguistic backgrounds.¶

Overwhelming Dominance of English: With 89 films, 'English' is the overwhelmingly dominant language, underscoring the global influence and reach of English-language cinema. This prevalence reflects both the large English-speaking audience worldwide and the significant output of major film industries like Hollywood.

Significant Presence of French Cinema: 'French (France)' ranks second with 13 films, indicating a strong representation of French cinema. This points to the cultural richness and global appeal of French-language films, known for their artistic and narrative depth.

Diversity in European and Asian Languages: Languages like 'Spanish', 'Japanese', 'Italian', 'Arabic', and 'Danish', each with a few films, highlight the diversity of the dataset. These languages represent significant national cinemas that contribute richly to the global tapestry of film.

English Variants and Regional Films: The presence of 'English (United Kingdom)' with 3 films and other regional variants like 'French (Canada)' with 1 film, illustrates the linguistic nuances and regional diversity within major languages.

Representation of Lesser-Known Languages: Languages such as 'Korean', 'Catalan', 'Finnish', 'Romanian', 'Urdu', 'Filipino', 'Norwegian', and 'German', each with 1 film, though less prevalent, are indicative of the wide range of global cinema. Their inclusion reflects the industry's breadth and the increasing recognition of diverse linguistic narratives.

Methodology Overview

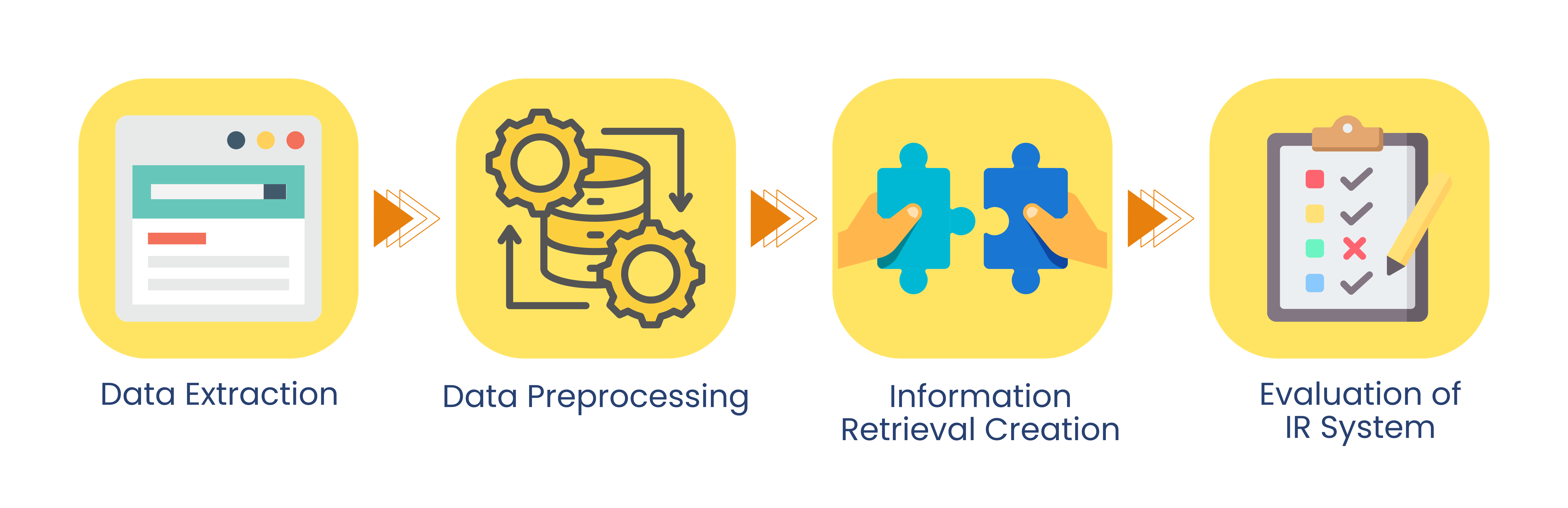

In creating the Information Retrieval System, the following were done:

Table 3.

Methodology Framework

| Step No. | Step | Description |

|---|---|---|

| 1. | Data Extraction | The team scraped data from the Rotten Tomatoes' website and complied these data into a dataframe which was then saved into a database. |

| 2. | Data Preprocessing | The team preprocessed the data through one-hot encoding, stemming, common word-normalization, lowercasing of each character, and other relevant preprocessing techniques. |

| 3. | Information Retrieval Creation | The team tested several distance measures for the system, where the distance measure which would provide the highest IR metric would be used as the final measure. |

| 4. | Evaluation of IR System | The team evaluated the created IR systems using average (precision @ k), average (recall @k), mean average precision, area under the Averaged Eleven-Point PR curve, and such which would then be discussed afterwards. |

Data Extraction

With web scraping, Requests, and BeautifulSoup consolidated in the function get_all_movie_info, the team was able to extract movie details from the Rotten Tomatoes movie pages.

As said beforehand, the categorical columns, genre and language, were one-hot encoded. This preprocessing is done for easier conversion to SQLite table. In the end, the team have all the details for each movie, detailed in Table 1.

These collected data was placed in a dataframe, and then turned into an SQLite database for exploration and processing through the function movie_sql.

Data Preprocessing

As said beforehand, the table movies_encoded from the rotten_tomatoes.db is loaded using the function load_sql. A preview is shown in Table 2.

%%capture

movies = load_sql()

In pre-processing the data, given that there are columns which are essentially documents or "collection" of strings which are synopsis and critic, these columns would be converted into bag-of-words through the function bag_of_words. In doing so, a fitted vectorizer would also be made available. To test the difference in performance between default parameters and "optimally" set parameters, the team would be making two dataframes named movie_default and movie_optimal.

In setting the parameters for movie_optimal, a preprocesser function named preprocesser was defined, wherein it places all the documents into lowercase, removes special characters, normalizes certain common words such as in, the, and the like, and stems the words through the English Snowball stemmer. The rationale for stemming the words is to allow the Information Retrieval System to "recognize" words which are diffent in spelling yet similar in meaning; these includes conjugated words such as explored and exploring. Furthermore, an ngram_range of (1,2) was set in order to capture meaningful two-word phrases which may help in finding similar movies or differentiating them from one another.

Moreover, common stop words acquired from Ganesan (n.d.) were used as stop words, and these include common words such as another, certain, and such. This is to remove noise from the system and to avoid associating dissimilar movies, by virtue of their similarities in numbers of these stop words. Lastly, to make sure that extremely rare and extremely common words are removed from the system, a max_df of 0.9 is set and a min_df of 0.1 is set as well. This would remove words which are present in more than 90% and in less than 10% of the documents.

warnings.filterwarnings('ignore')

# Movies (Default Mode)

vectorizer_default_synopsis, movie_default_synopsis = bag_of_words(

movies['synopsis'], mode='default')

movie_default_synopsis = pd.DataFrame.sparse.from_spmatrix(

movie_default_synopsis,

columns=vectorizer_default_synopsis.get_feature_names_out(),

)

vectorizer_default_critic, movie_default_critic = bag_of_words(

movies['critic'], mode='default')

movie_default_critic = pd.DataFrame.sparse.from_spmatrix(

movie_default_critic,

columns=vectorizer_default_critic.get_feature_names_out(),

)

# Movies (Optimal Mode)

vectorizer_optimal_synopsis, movie_optimal_synopsis = bag_of_words(

movies['synopsis'], mode='optimal')

movie_optimal_synopsis = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_synopsis,

columns=vectorizer_optimal_synopsis.get_feature_names_out(),

)

vectorizer_optimal_critic, movie_optimal_critic = bag_of_words(

movies['critic'], mode='optimal')

movie_optimal_critic = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_critic,

columns=vectorizer_optimal_critic.get_feature_names_out(),

)

The following are a preview of each table.

Table 4.

Movies Synopsis (Default Settings)

movie_default_synopsis.head(3)

| 11 | 12 | 12th | 15 | 16 | 17 | 18th | 1945 | 1960s | 1976 | ... | younger | youngest | your | yourself | youssef | youth | zadi | zakhar | zem | zero | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 3291 columns

Table 5.

Movies Critic (Default Settings)

movie_default_critic.head(3)

| 00s | 10 | 104 | 10s | 16mm | 18th | 1970 | 1980s | 1992 | 1998 | ... | zaniness | zany | zee | zero | zion | zip | zlotowski | zone | zoom | zootropolis | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |

3 rows × 4692 columns

Table 6.

Movies Synopsis (Optimal Settings)

movie_optimal_synopsis.head(3)

| _connector_ world | are | back | be | becom | begin | best | can | discov | famili | ... | them | they | time | two | when | who | world | year | year old | young | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | ... | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | 1 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 1 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 48 columns

Table 7.

Movies Critic (Optimal Settings)

movie_optimal_critic.head(3)

| _connector_ _connector_ | _connector_ end | _connector_ film | _connector_ it | _connector_ most | _connector_ movi | _connector_ spanish | _connector_ way | action | actor | ... | which | while | who | will | work | world | writer | year | you | your | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | ... | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |

| 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 156 columns

To further optimize Table 5, the IDFs of the vectorized critic and synopsis would be calculated, and from this, the tokens with the lowest IDFs would be removed, as these tokens would serve as the "domain-specific" common words, wherein the domain in this study would be on films.

# Synopsis Optimal

vectorizer_optimal_synopsis, movie_optimal_synopsis = bag_of_words(

movies['synopsis'], mode='optimal')

idf_movie_synopsis = sort_idf(movie_optimal_synopsis,

vectorizer_optimal_synopsis)

# Critic Optimal

vectorizer_optimal_critic, movie_optimal_critic = bag_of_words(

movies['critic'], mode='optimal')

idf_movie_critic = sort_idf(movie_optimal_critic,

vectorizer_optimal_critic)

The following are the tables showing the top 5 words with the least IDFs. What would be done then with the "domain-specific" common words which are at the range from 1 to 2 (exclusive) within this top 5 list would be to remove them as they serve as noise and do not help in differentiating the movies at all.

Table 8.

Movies_Optimal Synopsis IDFs

idf_movie_synopsis.head(5)

| idf_weights | |

|---|---|

| is | 1.655120 |

| when | 1.889520 |

| who | 2.276293 |

| she | 2.276293 |

| life | 2.394077 |

Table 9.

Movies_Optimal Critic IDFs

idf_movie_critic.head(5)

| idf_weights | |

|---|---|

| film | 1.244692 |

| movi | 1.625706 |

| be | 1.732678 |

| what | 1.834461 |

| more | 1.834461 |

Having seen that there are tokens which have very low IDFs, using the same "optimal" parameters, both columns synopsis and critic would be converted to the bag-of-words again, but preprocessed with the new "domain-specific" stop words, alongside the original set of stop words.

# Optimal Synopsis

vectorizer_optimal_synopsis, movie_optimal_synopsis = bag_of_words(

movies['synopsis'],

mode='optimal',

stop_extend=list(idf_movie_synopsis.head(2).index)

)

movie_optimal_synopsis = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_synopsis,

columns=vectorizer_optimal_synopsis.get_feature_names_out(),

)

# Optimal Critic

vectorizer_optimal_critic, movie_optimal_critic = bag_of_words(

movies['critic'],

mode='optimal',

stop_extend=list(idf_movie_critic.head(5).index)

)

movie_optimal_critic = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_critic,

columns=vectorizer_optimal_critic.get_feature_names_out(),

)

Table 10.

Movies Synopsis (Optimal Settings; Additional Stop)

movie_optimal_synopsis.head(3)

| _connector_ world | are | back | be | becom | begin | best | can | discov | famili | ... | take | them | they | time | two | who | world | year | year old | young | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 1 | 0 |

| 2 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 46 columns

Table 11.

Movies Critic (Optimal Settings; Additional Stop)

movie_optimal_critic.head(3)

| _connector_ _connector_ | _connector_ end | _connector_ is | _connector_ it | _connector_ most | _connector_ spanish | _connector_ way | action | actor | also | ... | which | while | who | will | work | world | writer | year | you | your | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 1 | ... | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |

| 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | ... | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

3 rows × 148 columns

The four tables (default and optimal) would then be normalized as TFIDF values. This would be done through the function normalize_tfidf.

# Movies (Default Mode)

vectorizer_default_synopsis, movie_default_synopsis = bag_of_words(

movies['synopsis'], mode='default')

vectorizer_default_critic, movie_default_critic = bag_of_words(

movies['critic'], mode='default')

vectorizer_def_sys_tfidf, movie_default_synopsis = normalize_tfidf(

movie_default_synopsis)

vectorizer_def_crit_tfidf, movie_default_critic = normalize_tfidf(

movie_default_critic)

movie_default_synopsis = pd.DataFrame.sparse.from_spmatrix(

movie_default_synopsis,

columns=vectorizer_default_synopsis.get_feature_names_out(),

)

movie_default_critic = pd.DataFrame.sparse.from_spmatrix(

movie_default_critic,

columns=vectorizer_default_critic.get_feature_names_out(),

)

# Movies (Optimal Mode)

vectorizer_optimal_synopsis, movie_optimal_synopsis = bag_of_words(

movies['synopsis'],

mode='optimal',

stop_extend=list(idf_movie_synopsis.head(2).index)

)

vectorizer_optimal_critic, movie_optimal_critic = bag_of_words(

movies['critic'],

mode='optimal',

stop_extend=list(idf_movie_critic.head(5).index)

)

vectorizer_opt_sys_tfidf, movie_optimal_synopsis = normalize_tfidf(

movie_optimal_synopsis)

vectorizer_opt_crit_tfidf, movie_optimal_critic = normalize_tfidf(

movie_optimal_critic)

movie_optimal_synopsis = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_synopsis,

columns=vectorizer_optimal_synopsis.get_feature_names_out(),

)

movie_optimal_critic = pd.DataFrame.sparse.from_spmatrix(

movie_optimal_critic,

columns=vectorizer_optimal_critic.get_feature_names_out(),

)

The four tables are previewed below:

Table 12.

Movies Synopsis (Default Settings; TFIDF Normalized)

movie_default_synopsis.head(5)

| 11 | 12 | 12th | 15 | 16 | 17 | 18th | 1945 | 1960s | 1976 | ... | younger | youngest | your | yourself | youssef | youth | zadi | zakhar | zem | zero | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 3 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

| 4 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 |

5 rows × 3291 columns

Table 13.

Movies Critic (Default Settings; TFIDF Normalized)

movie_default_critic.head(5)

| 00s | 10 | 104 | 10s | 16mm | 18th | 1970 | 1980s | 1992 | 1998 | ... | zaniness | zany | zee | zero | zion | zip | zlotowski | zone | zoom | zootropolis | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 1 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 2 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.127834 | 0.0 |

| 3 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 4 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

5 rows × 4692 columns

Table 14.

Movies Synopsis (Optimal Settings; TFIDF Normalized)

movie_optimal_synopsis.head(5)

| _connector_ world | are | back | be | becom | begin | best | can | discov | famili | ... | take | them | they | time | two | who | world | year | year old | young | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0.0 | 0.301699 | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.301699 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.289036 | 0.000000 | 0.306247 |

| 1 | 0.0 | 0.000000 | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.000000 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.243383 | 0.0 | 0.266502 | 0.323606 | 0.000000 |

| 2 | 0.0 | 0.000000 | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.340635 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.000000 | 0.000000 | 0.000000 |

| 3 | 0.0 | 0.000000 | 0.245681 | 0.440677 | 0.0 | 0.240856 | 0.0 | 0.0 | 0.0 | 0.000000 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.181147 | 0.0 | 0.000000 | 0.000000 | 0.210165 |

| 4 | 0.0 | 0.000000 | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.000000 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 1.0 | 0.000000 | 0.0 | 0.000000 | 0.000000 | 0.000000 |

5 rows × 46 columns

Table 15.

Movies Critic (Optimal Settings; TFIDF Normalized)

movie_optimal_critic.head(5)

| _connector_ _connector_ | _connector_ end | _connector_ is | _connector_ it | _connector_ most | _connector_ spanish | _connector_ way | action | actor | also | ... | which | while | who | will | work | world | writer | year | you | your | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.000000 | 0.189491 | 0.0 | 0.0 | 0.197687 | 0.167216 | ... | 0.000000 | 0.175795 | 0.000000 | 0.0 | 0.146946 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 1 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.000000 | 0.000000 | 0.0 | 0.0 | 0.000000 | 0.000000 | ... | 0.000000 | 0.000000 | 0.151074 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 2 | 0.0 | 0.0 | 0.190172 | 0.0 | 0.000000 | 0.000000 | 0.0 | 0.0 | 0.000000 | 0.000000 | ... | 0.000000 | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 3 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.000000 | 0.000000 | 0.0 | 0.0 | 0.000000 | 0.000000 | ... | 0.000000 | 0.000000 | 0.145567 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 |

| 4 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.161545 | 0.154848 | 0.0 | 0.0 | 0.161545 | 0.000000 | ... | 0.132463 | 0.000000 | 0.125019 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.095729 | 0.0 |

5 rows × 148 columns

These default and optimal dataframes are then concatenated separately to the main dataframe movies, resulting to two dataframes movies_default and movies_optimal. As the next step is normalization of the column runtime, given that runtime is a buzzword in the film domain and it was seen that there is indeed a token named runtime in the bag-of-word dataframes, the original runtime column name would be changed to runtime_old. Moreover, given that title would be used and there is a duplicate column because of the bag-of-word step, the column name for title is changed to title_old.

concat_movies = movies.rename(columns={'runtime': 'runtime_old',

'title': 'title_old'})

# Movies (Default)

movies_default = concat_movies.drop(['critic', 'synopsis'], axis=1)

movies_default = pd.concat([movies_default, movie_default_synopsis,

movie_default_critic], axis=1)

# Movies (Optimal)

movies_optimal = concat_movies.drop(['critic', 'synopsis'], axis=1)

movies_optimal = pd.concat([movies_optimal, movie_optimal_synopsis,

movie_optimal_critic], axis=1)

Lastly, the column runtime in both dataframes will be scaled with MinMaxScaler in order to scale the values in this column to the range from zero to one, the range in the one-hot encoded columns and in the TFIDF normalized columns.

scaler_default = MinMaxScaler()

scaler_optimal = MinMaxScaler()

# Movies (Default)

runtime_default = scaler_default.fit_transform(

movies_default[['runtime_old']])

runtime_default = pd.DataFrame(runtime_default, columns=['runtime_old'])

movies_default.drop('runtime_old', axis=1, inplace=True)

movies_default = pd.concat([movies_default, runtime_default], axis=1)

# Movies (Optimal)

runtime_optimal = scaler_optimal.fit_transform(

movies_optimal[['runtime_old']])

runtime_optimal = pd.DataFrame(runtime_optimal, columns=['runtime_old'])

movies_optimal.drop('runtime_old', axis=1, inplace=True)

movies_optimal = pd.concat([movies_optimal, runtime_optimal], axis=1)

The final tables which would be used in the Information Retrieval part are presented below:

Table 16.

Movies Default (Final)

movies_default.head(3)

| title_old | g_action | g_adventure | g_animation | g_anime | g_biography | g_comedy | g_crime | g_drama | g_fantasy | ... | zany | zee | zero | zion | zip | zlotowski | zone | zoom | zootropolis | runtime_old | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Afire | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.572222 |

| 1 | A Thousand and One | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.000000 | 0.0 | 0.650000 |

| 2 | A Million Miles Away | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | ... | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.127834 | 0.0 | 0.672222 |

3 rows × 8026 columns

Table 17.

Movies Optimal (Final)

movies_optimal.head(3)

| title_old | g_action | g_adventure | g_animation | g_anime | g_biography | g_comedy | g_crime | g_drama | g_fantasy | ... | while | who | will | work | world | writer | year | you | your | runtime_old | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Afire | 0 | 0 | 0 | 0 | 0 | 1 | 0 | 1 | 0 | ... | 0.175795 | 0.000000 | 0.0 | 0.146946 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.572222 |

| 1 | A Thousand and One | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 | ... | 0.000000 | 0.151074 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.650000 |

| 2 | A Million Miles Away | 0 | 0 | 0 | 0 | 1 | 0 | 0 | 1 | 0 | ... | 0.000000 | 0.000000 | 0.0 | 0.000000 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.672222 |

3 rows × 237 columns

Information Retrieval Creation

For the information retrieval system, the following distance metrics would be used:

- Euclidian Distance (l2 norm)

- Manhattan Distance (l1 norm)

- Cosine Distance

In order to get all the distance matrices for both dataframes, the function nearest_k would be used. The results from each call would then be used for the evaluation part.

Evaluation of IR System

In evaluating the IR System, the following genres from Rotten Tomatoes and their corresponding movies were used as the queries. This is in order to approximate a representation of querying each type of movie in evaluating the performance of the IR system.

Table 18.

Genres and Corresponding Movies (Evaluation)

| No. | Genre | Movie |

|---|---|---|

| 1. | Drama | Oppenheimer |

| 2. | Comedy | Sitting in Bars with Cake |

| 3. | Mystery and Thriller | M3GAN |

| 4. | Horror | The Blackening |

| 5. | Romance | Red, White & Royal Blue |

| 6. | Action | John Wick: Chapter 4 |

| 7. | Sci-Fi | Biosphere |

| 8. | Adventure | Guardians of the Galaxy Vol. 3 |

| 9. | LGBTQA+ | Shortcomings |

| 10. | Fantasy | Suzume |

As a preface for the validation data or gold standard, the team assumed the roles of domain experts in validating whether a film is relevant to a given film or not. The relevant films were determined by comparing the genre, summary, and trailers of one film vs. the other, where similar genres and storyline are determined relevant to each other. Moreover, instead of having only one team member validate the relevance of a film to another, another team member also did their own validation. In the case of conflict between the judgment of one team member over the other, one team member served as arbiter in deciding whether the said film is indeed relevant or not. This validation data or gold standard, which is opened as a dataframe, is cleaned minimally, wherein null values are turned to into zeroes, twos (indicating relevance) or hundreds (indicating exact similarity to the film in question) are changed to ones, and the dtype is changed to int. Moreover, given a difference in the order of the titles, the titles were sorted in accordance with the order in the original movies dataframe.

Table 19.

Preview of Validation Data

validation_data = pd.read_csv('validation_data.csv')

validation_data.fillna(0, inplace=True)

validation_data_temp = validation_data.iloc[:, 1:].astype(int)

validation_data = pd.concat([validation_data.iloc[:, 0],

validation_data_temp],

axis=1)

validation_data = validation_data.map(

lambda x: 1 if x == 2 or x == 100 else x)

movies.set_index('title', inplace=True)

validation_data.set_index('title', inplace=True)

validation_data = validation_data.reindex(movies.index)

movies.reset_index(inplace=True)

validation_data.reset_index(inplace=True)

validation_data.head(3)

| title | Oppenheimer | Sitting in Bars with Cake | M3GAN | The Blackening | Red, White & Royal Blue | John Wick: Chapter 4 | Biosphere | Guardians of the Galaxy Vol. 3 | Shortcomings | Suzume | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | Afire | 0 | 1 | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 |

| 1 | A Thousand and One | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

| 2 | A Million Miles Away | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |

The kappa statistic, a measure of how much the judges agree on relevance, whether they agree all of the time, most of the time, by chance or by "worse than random" (possibly hinting a negative bias), would be calculated for all combinations of the three judges to provide a tight rationale on why the created gold standard should be used (Cambridge UP, 2008).

The method for acquiring the kappa statistic is as follows (Cambridge UP, 2008):

The $P(A)$ or the observed proportion of the times the judges agreed would be first calculated and this is calculated by:

$$P(A) = P(Yes \space and \space Yes) + P(No \space and \space No)$$

Second, the pooled marginals are acquired and calculated as follows:

$$P(nonrelevant) = \frac{Total \space No \space Votes}{Total \space Votes}$$

$$P(relevant) = \frac{Total \space Yes \space Votes}{Total \space Votes}$$

Using these pooled marginals, $P(E)$ or the probability that the judges agreed by chance is calculated where it is defined as:

$$P(E) = P(nonrelevant)^{2} + P(relevant)^{2}$$

Lastly, the kappa statistic is calculated using these values:

$$\kappa = \frac{(P(A) - P(E))}{(1- P(E))}$$

Shown below are the relevance matrix for each pair of judge and a table containing all their kappa statistics and the average pairwise kappa statistic, created from the Data Frames from the csv files for each judge (judge_1.csv, judge_2.csv, and validation_data.csv) using the function kappa where alongside the calculation, null values are changed to zeroes and non-null values are changed to ones. Moreover, the title columns in all data frames are removed. The average pairwise kappa statistic is then calculated and evaluated to show whether the gold standard is reliable or not.

Table 20.

Relevance Matrix (Judge 1 vs. Judge 2)

judge_1 = pd.read_csv('judge_1.csv')

judge_1.drop('title', axis=1)

judge_2 = pd.read_csv('judge_2.csv')

judge_2.drop('title', axis=1)

judge_3 = pd.read_csv('validation_data.csv')

judge_3.drop('title', axis=1)

kappa_12, rel_mat12 = kappa(judge_1, judge_2, 'Judge 1', 'Judge 2')

rel_mat12

| Judge 2 | ||||

|---|---|---|---|---|

| Yes | No | Total | ||

| Judge 1 | Yes | 151 | 27 | 178 |

| No | 45 | 1185 | 1230 | |

| Total | 196 | 1212 | 1408 | |

Table 21.

Relevance Matrix (Judge 2 vs. Judge 3)

kappa_23, rel_mat23 = kappa(judge_2, judge_3, 'Judge 2', 'Judge 3')

rel_mat23

| Judge 3 | ||||

|---|---|---|---|---|

| Yes | No | Total | ||

| Judge 2 | Yes | 166 | 30 | 196 |

| No | 11 | 1201 | 1212 | |

| Total | 177 | 1231 | 1408 | |

Table 22.

Relevance Matrix (Judge 1 vs. Judge 3)

kappa_13, rel_mat13 = kappa(judge_1, judge_3, 'Judge 1', 'Judge 3')

rel_mat13

| Judge 3 | ||||

|---|---|---|---|---|

| Yes | No | Total | ||

| Judge 1 | Yes | 162 | 16 | 178 |

| No | 15 | 1215 | 1230 | |

| Total | 177 | 1231 | 1408 | |

Table 23.

Kappa Statistics

pd.DataFrame(

{

"Judge 1 vs. 2": "{:0.2f}".format(kappa_12),

"Judge 2 vs. 3": "{:0.2f}".format(kappa_23),

"Judge 1 vs. 3": "{:0.2f}".format(kappa_13),

"Average Pairwise": "{:0.2f}".format(np.mean([kappa_12, kappa_23,

kappa_13])),

},

index=["Kappa Statistics"],

)

| Judge 1 vs. 2 | Judge 2 vs. 3 | Judge 1 vs. 3 | Average Pairwise | |

|---|---|---|---|---|

| Kappa Statistics | 0.78 | 0.87 | 0.90 | 0.85 |

Given that Cambridge UP (2008) states that values above 0.8 is taken as "good agreement", values between 0.67 and 0.8 is taken as "fair agreement", and values below 0.67 would consider the created standard as a dubious basis for the evaluation and given that the calculated average pairwise kappa statistic would be considered as "good agreement", the created gold standard is thus reliable. As a side note, despite Judge 1 and Judge 2 having a "fair agreement" in their own sets of evaluation, this relatively lower yet sufficient kappa statistic was raised by the arbitrations of the third judge, turning it into a "good agreement".

Moving on, in evaluating the two variants of the Information Retrieval System, several metrics would be used, and to guide the parameters (i.e., k) for some metrics, a Precision-Recall vs. k graph would be first plotted with the help of functions such as pk, and rk, and using the insights from these graph, the parameters would then be set. The important evaluation metrics are:

Table 24.

Evaluation Metrics

| No. | Evaluation Metric | Description |

|---|---|---|

| 1. | Average (Precision @ k) |